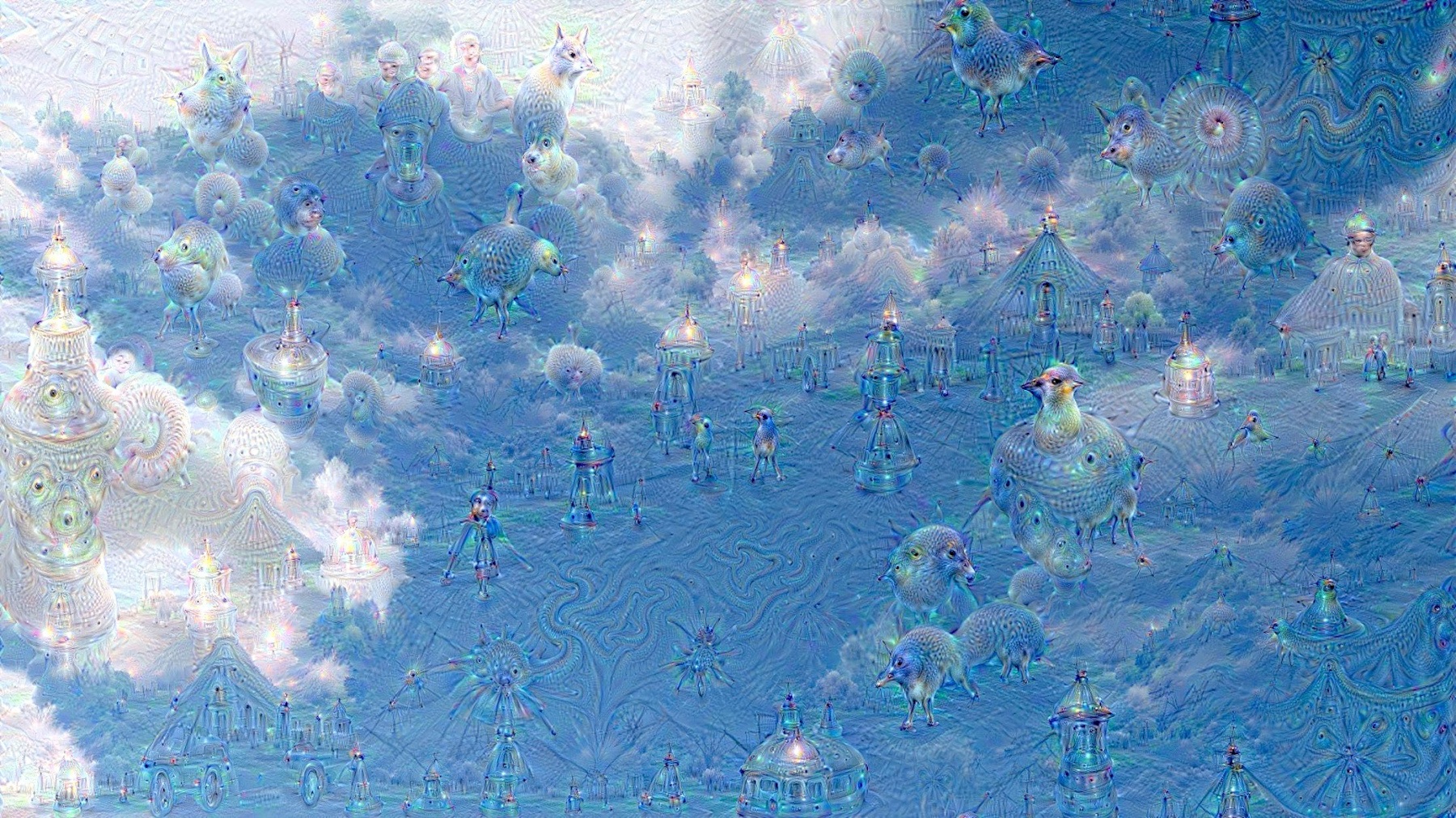

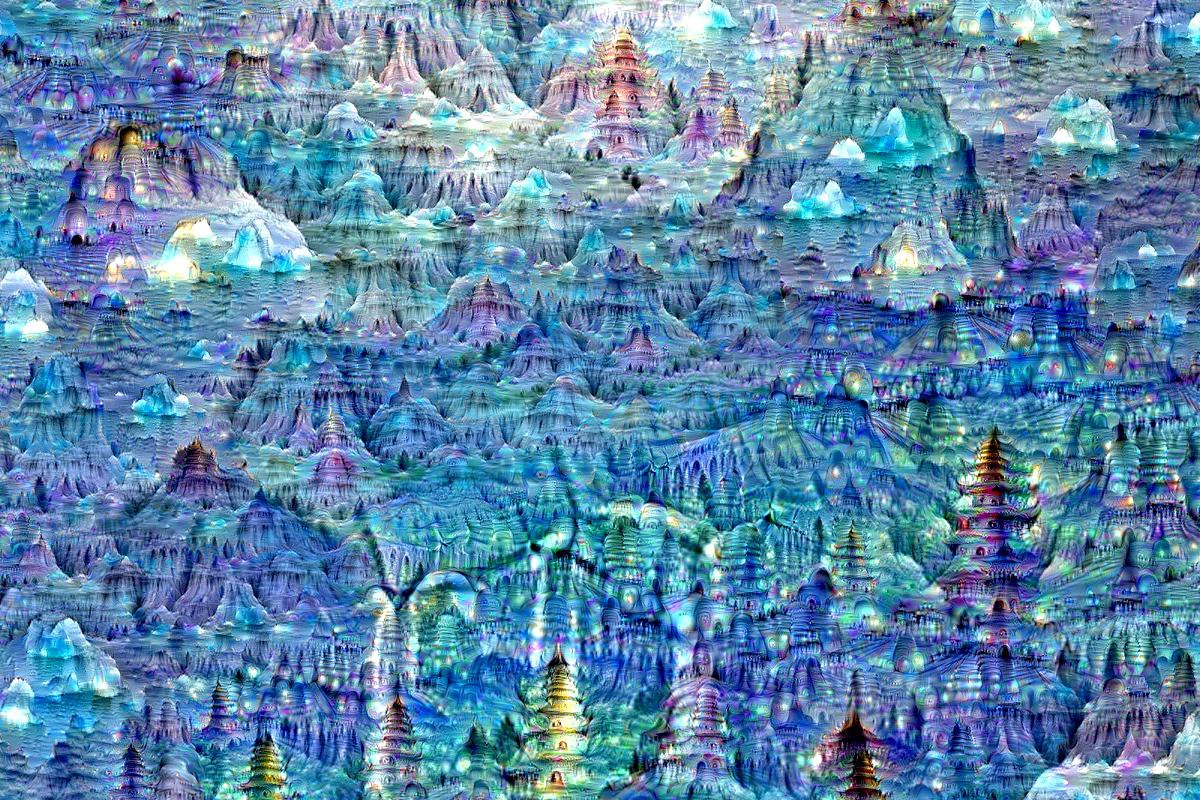

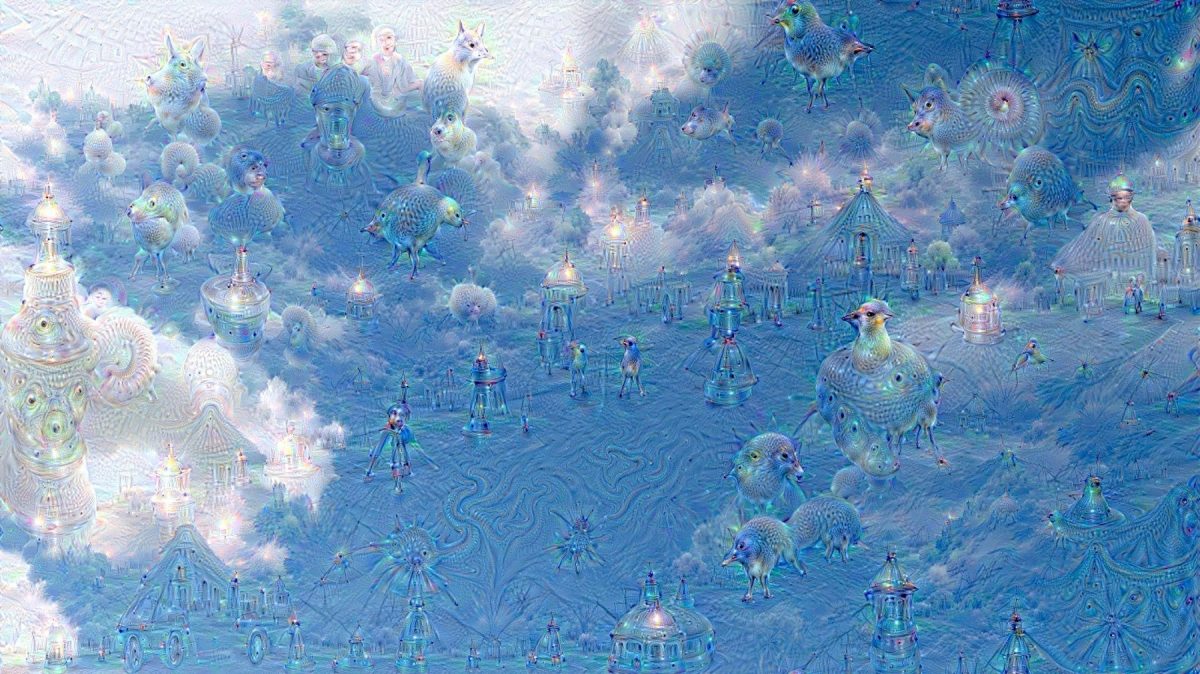

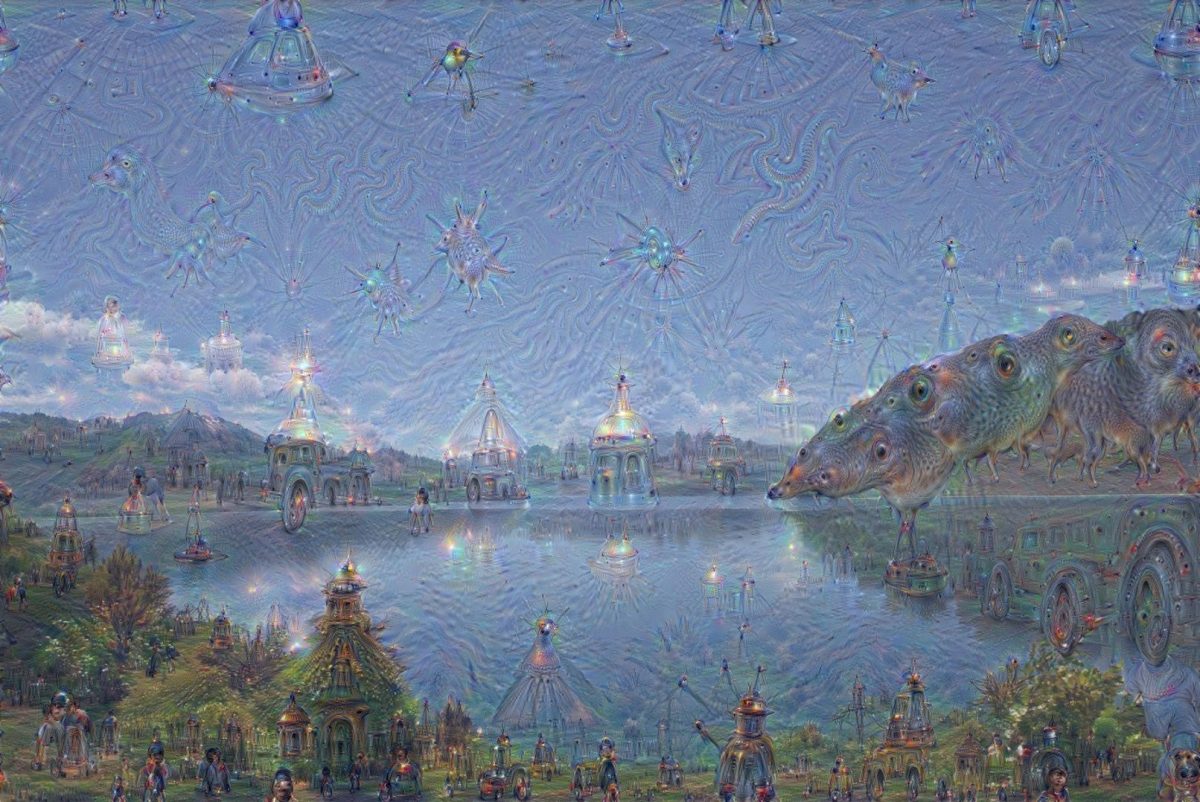

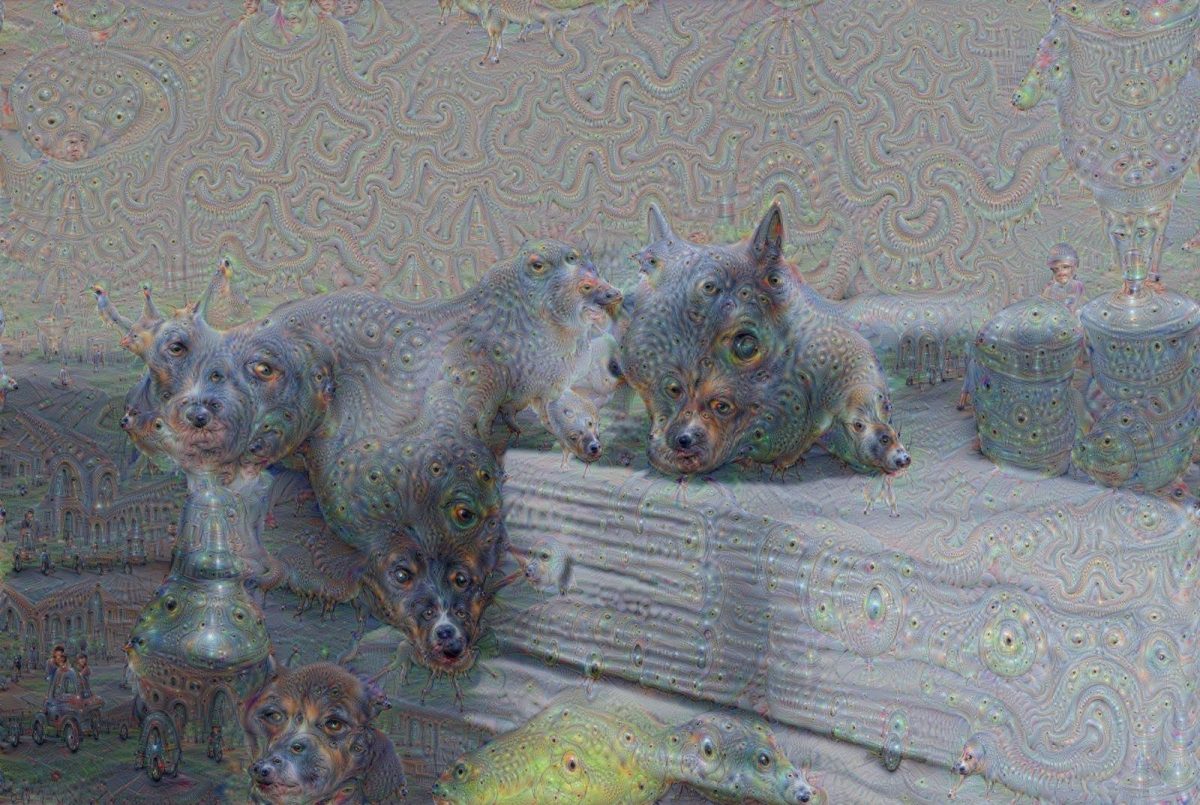

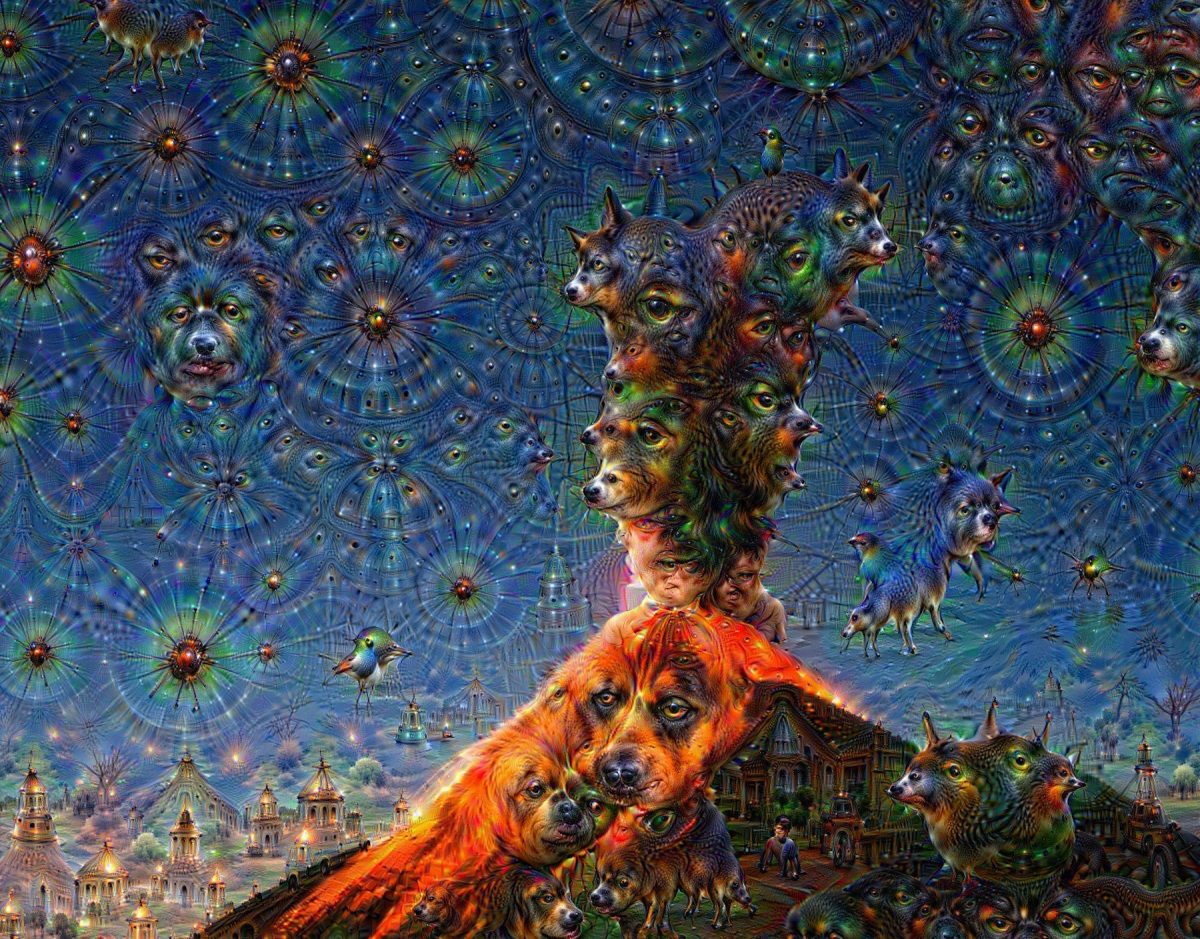

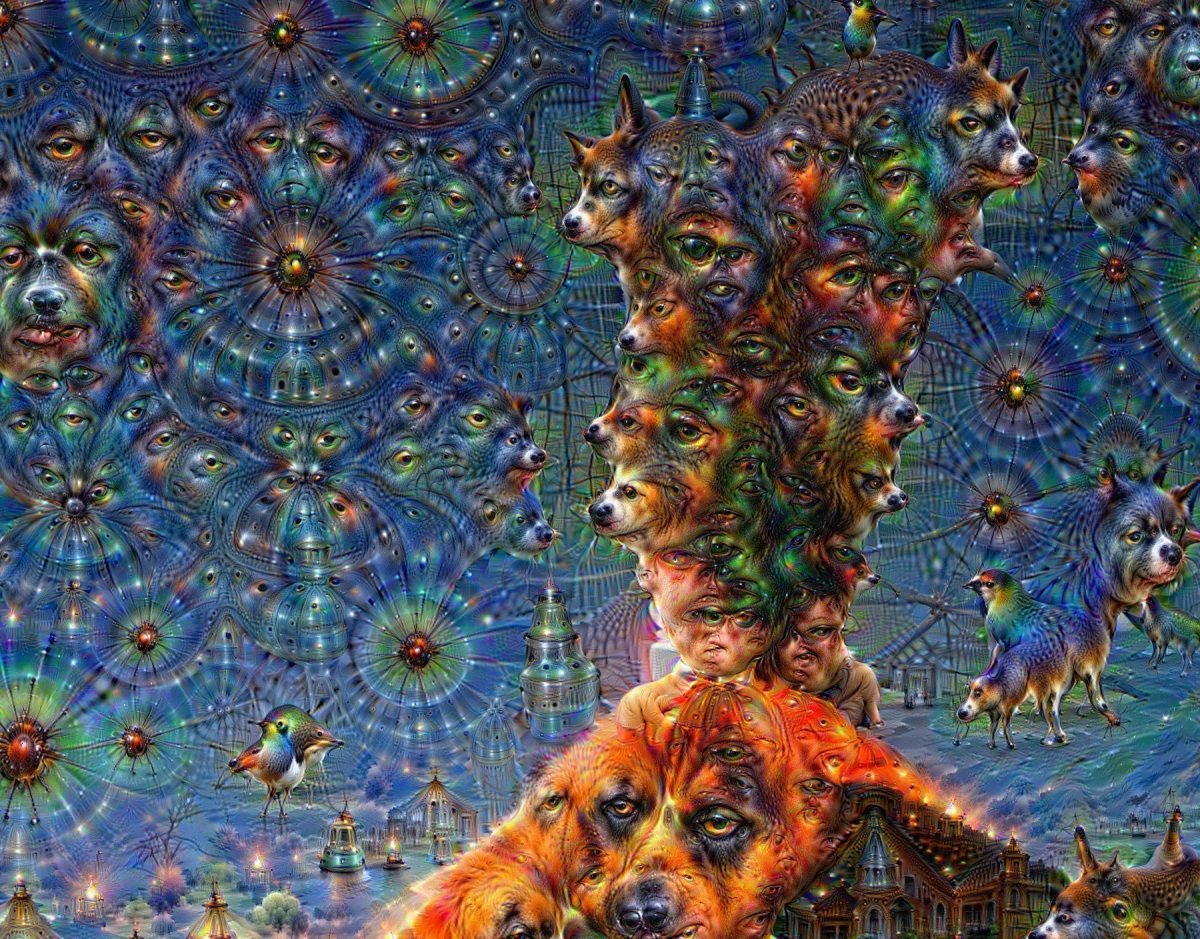

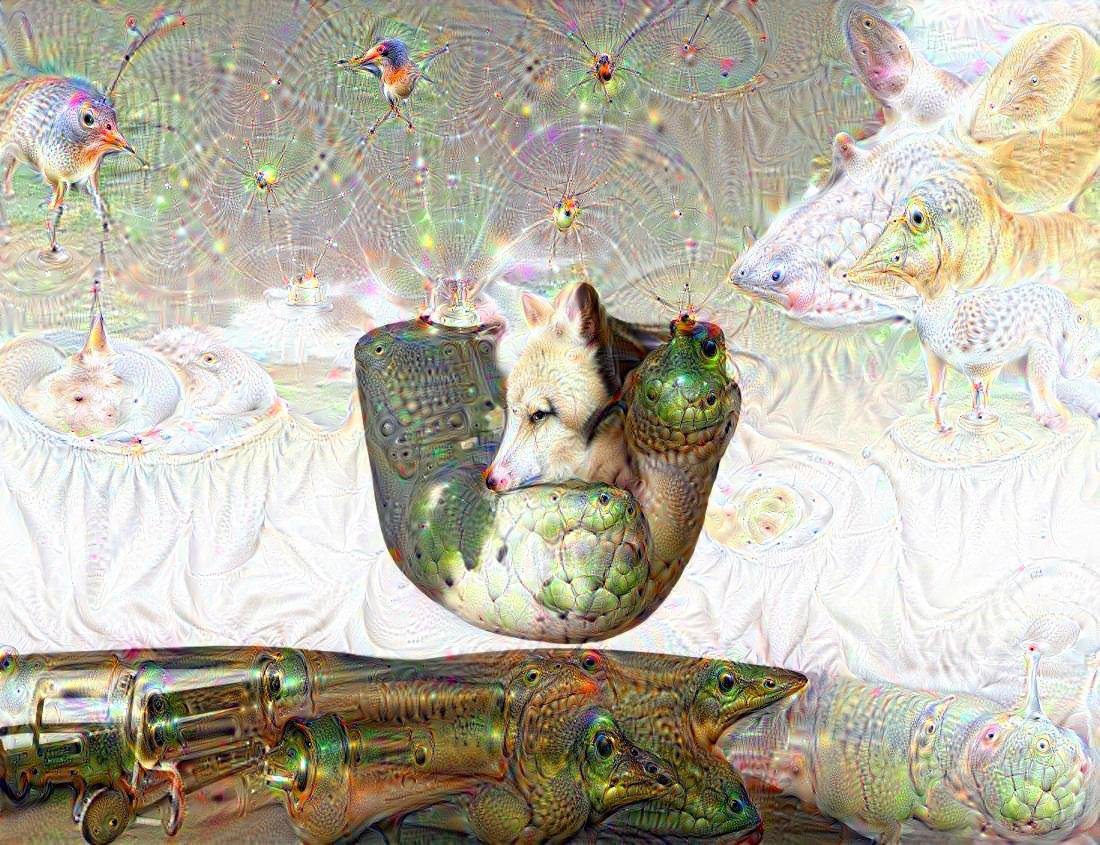

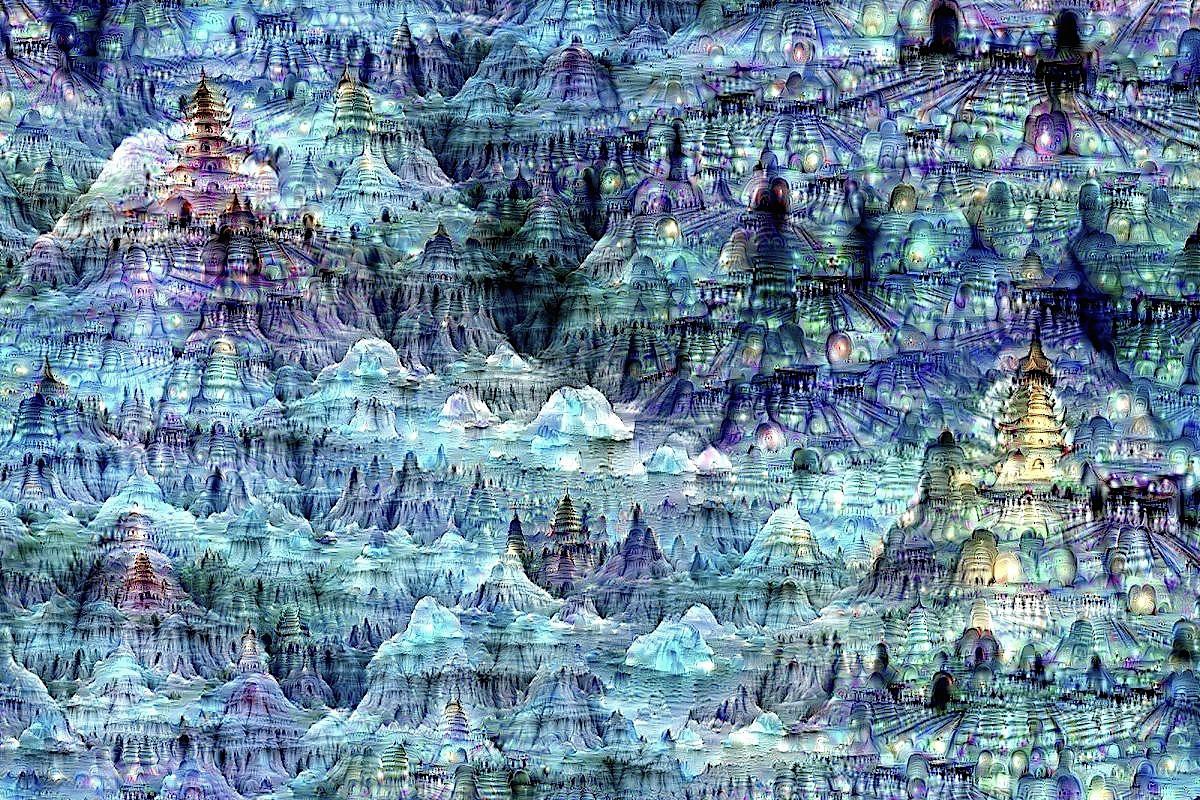

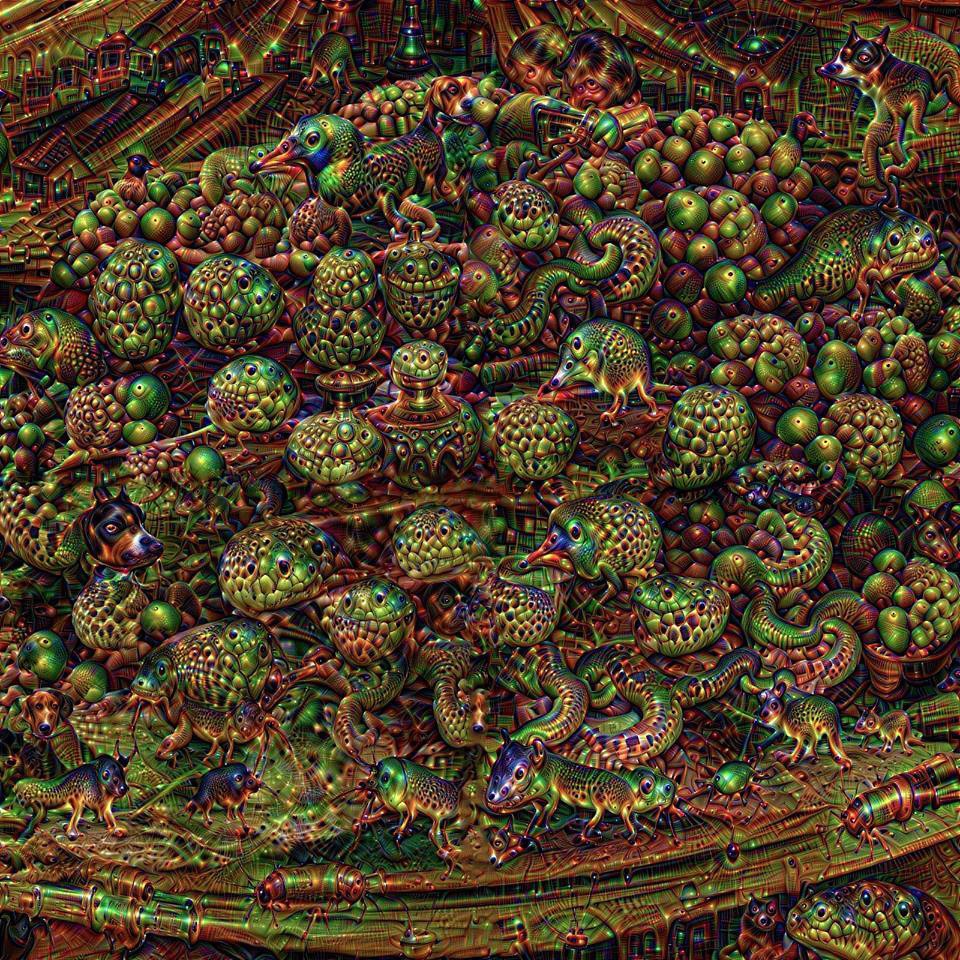

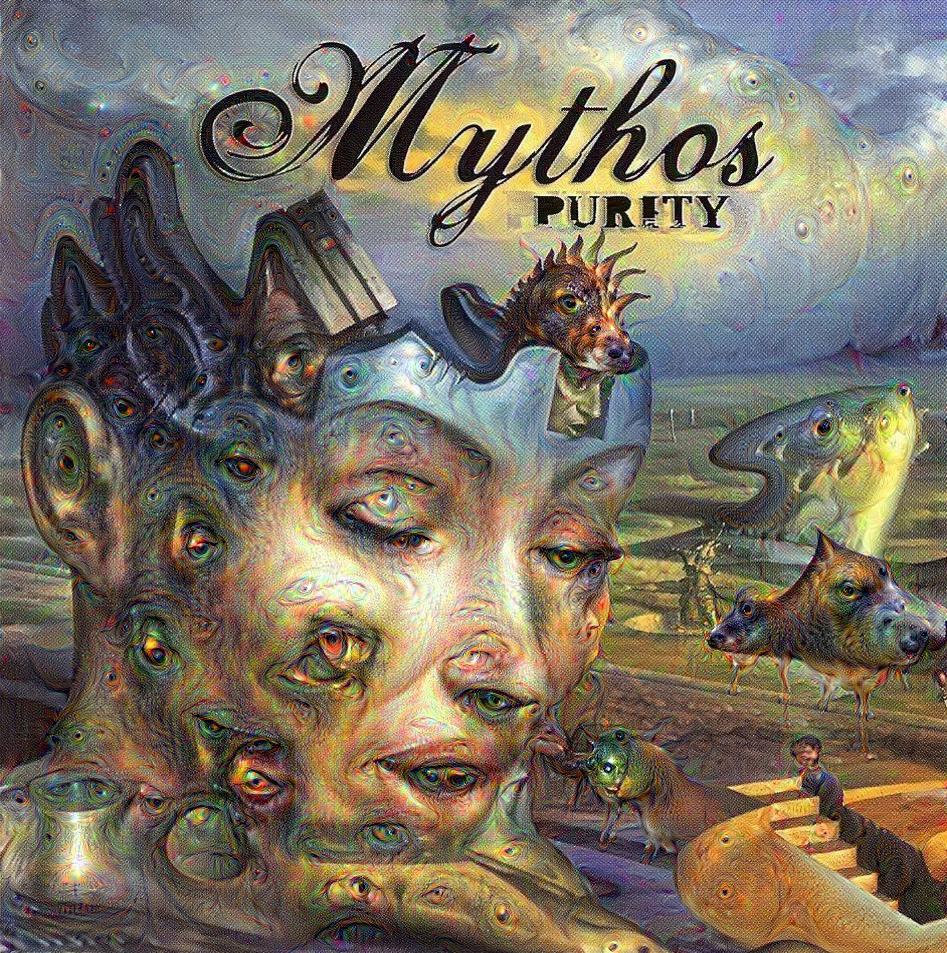

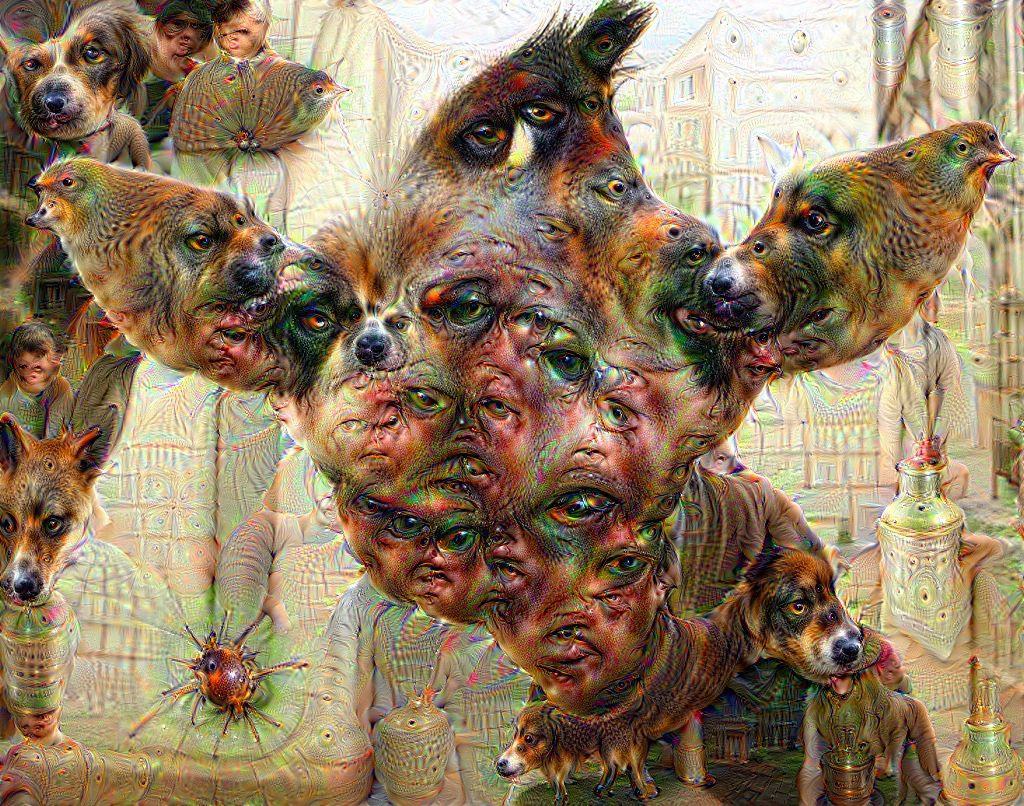

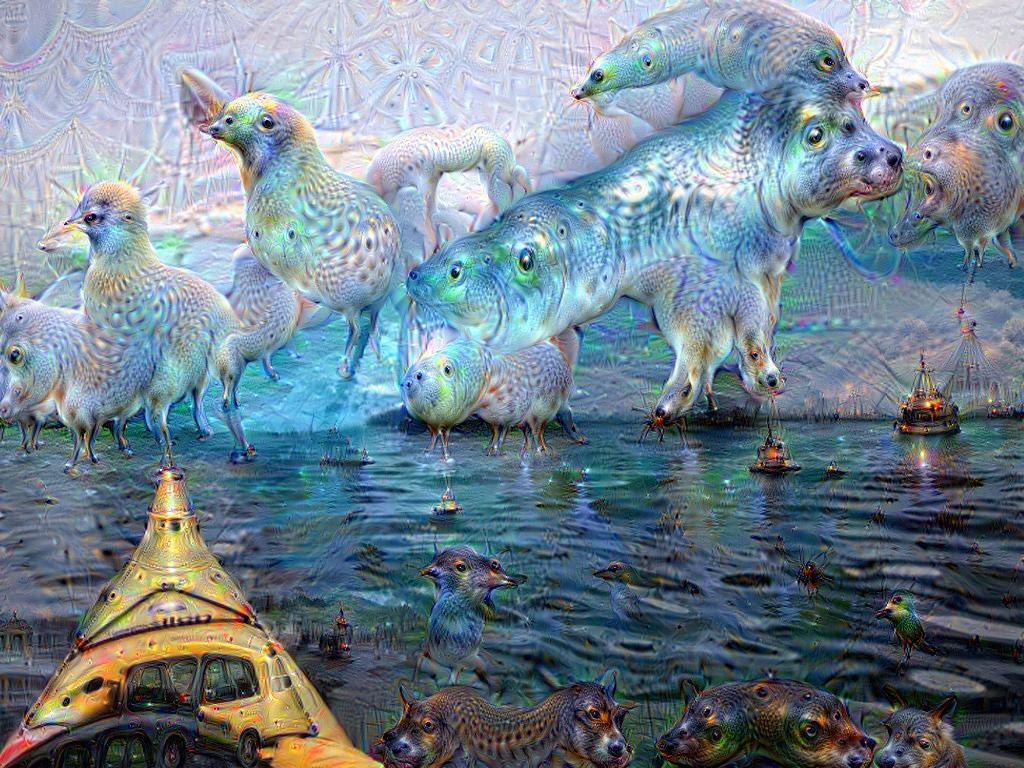

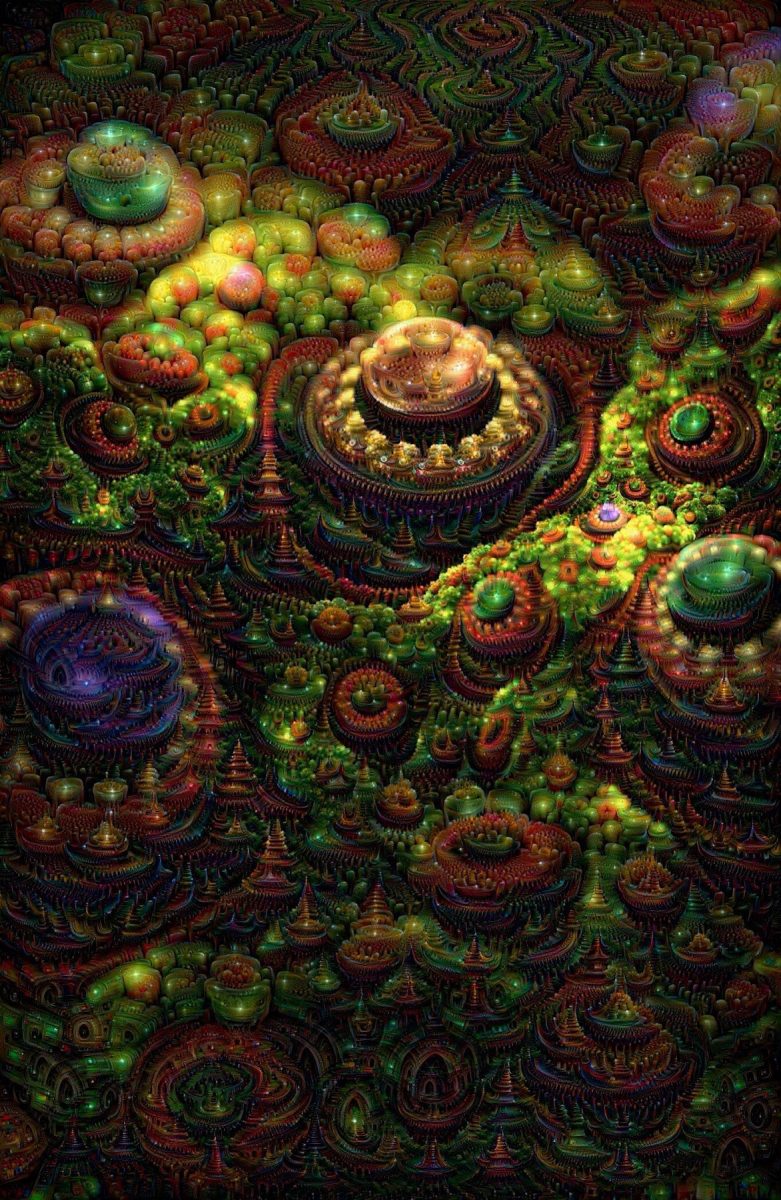

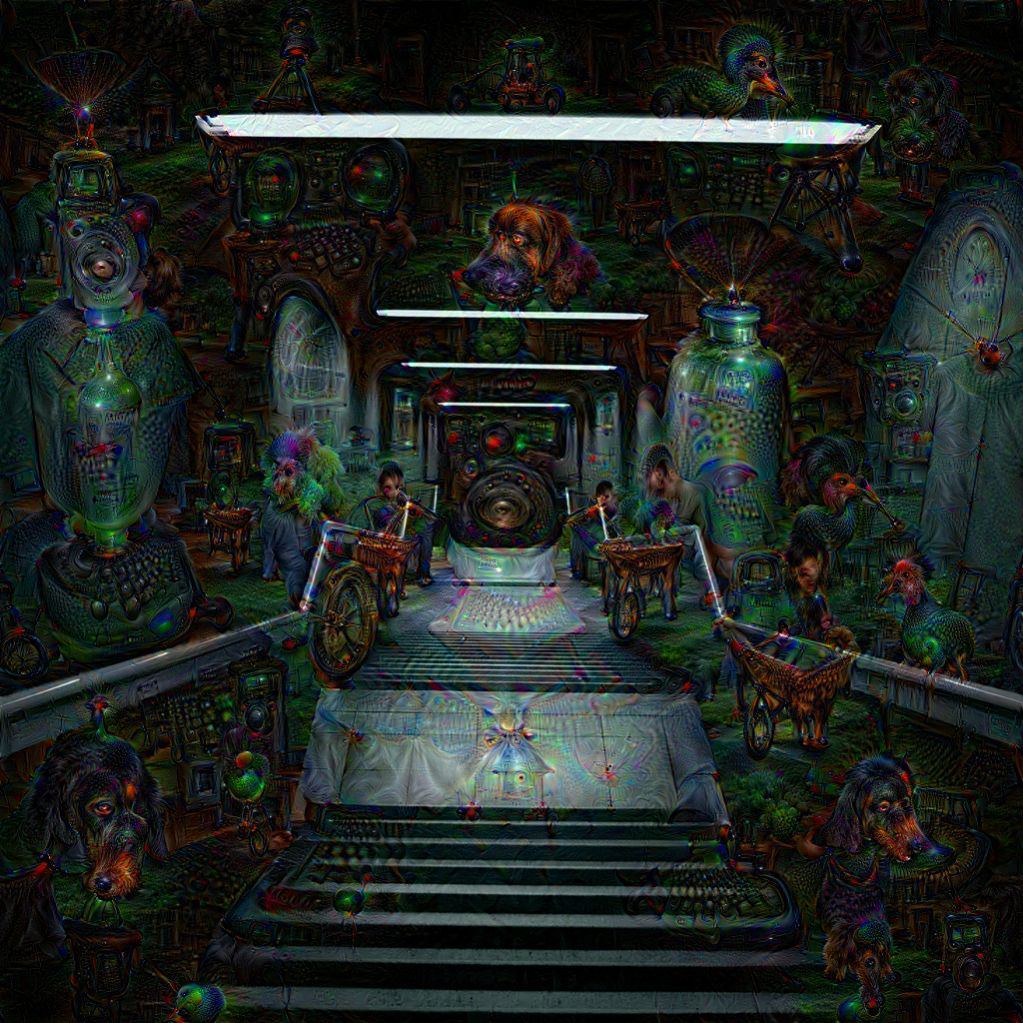

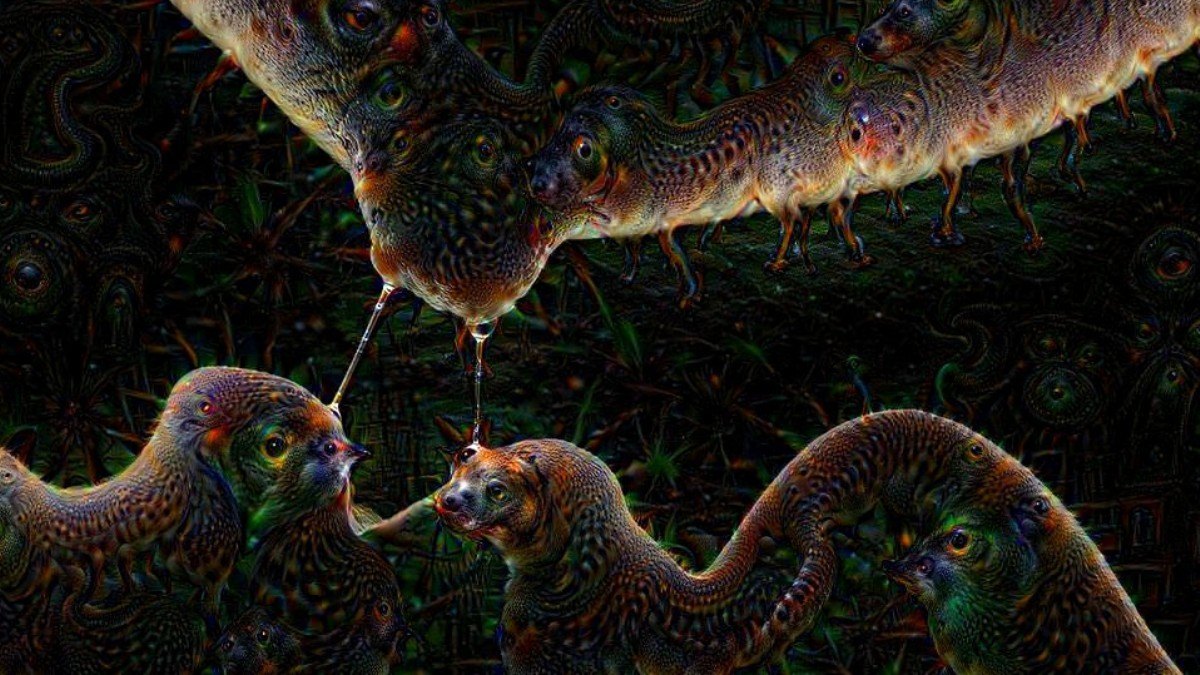

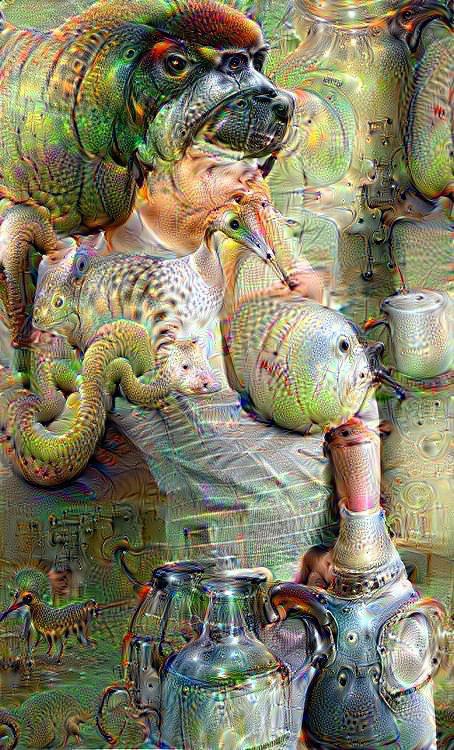

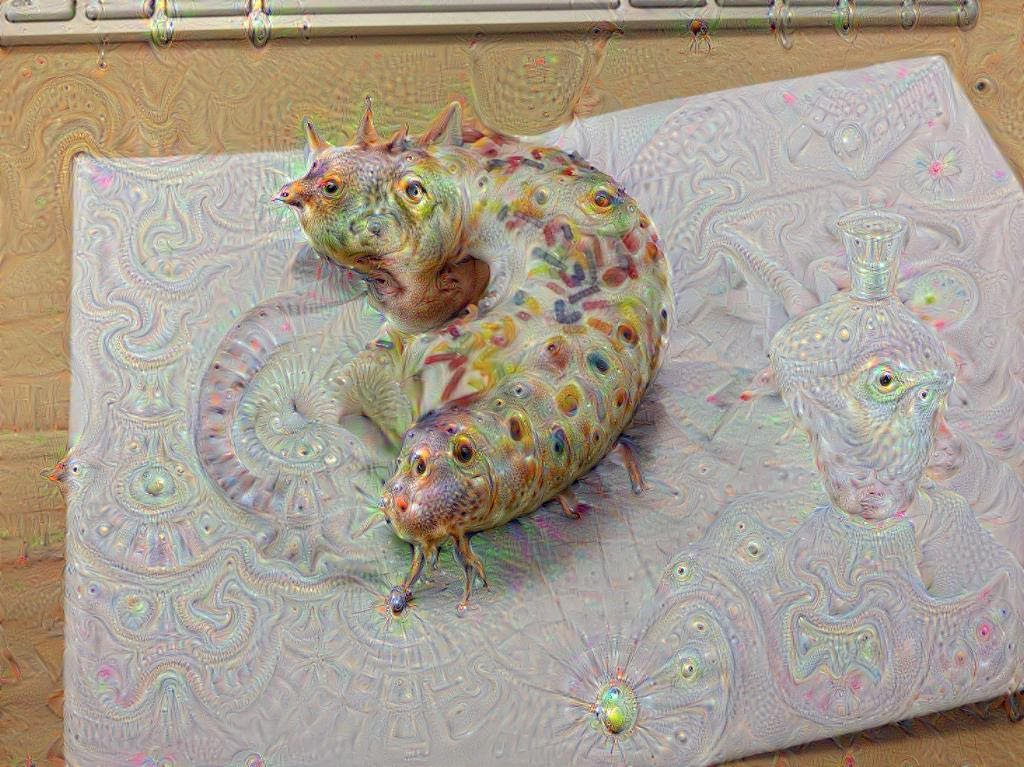

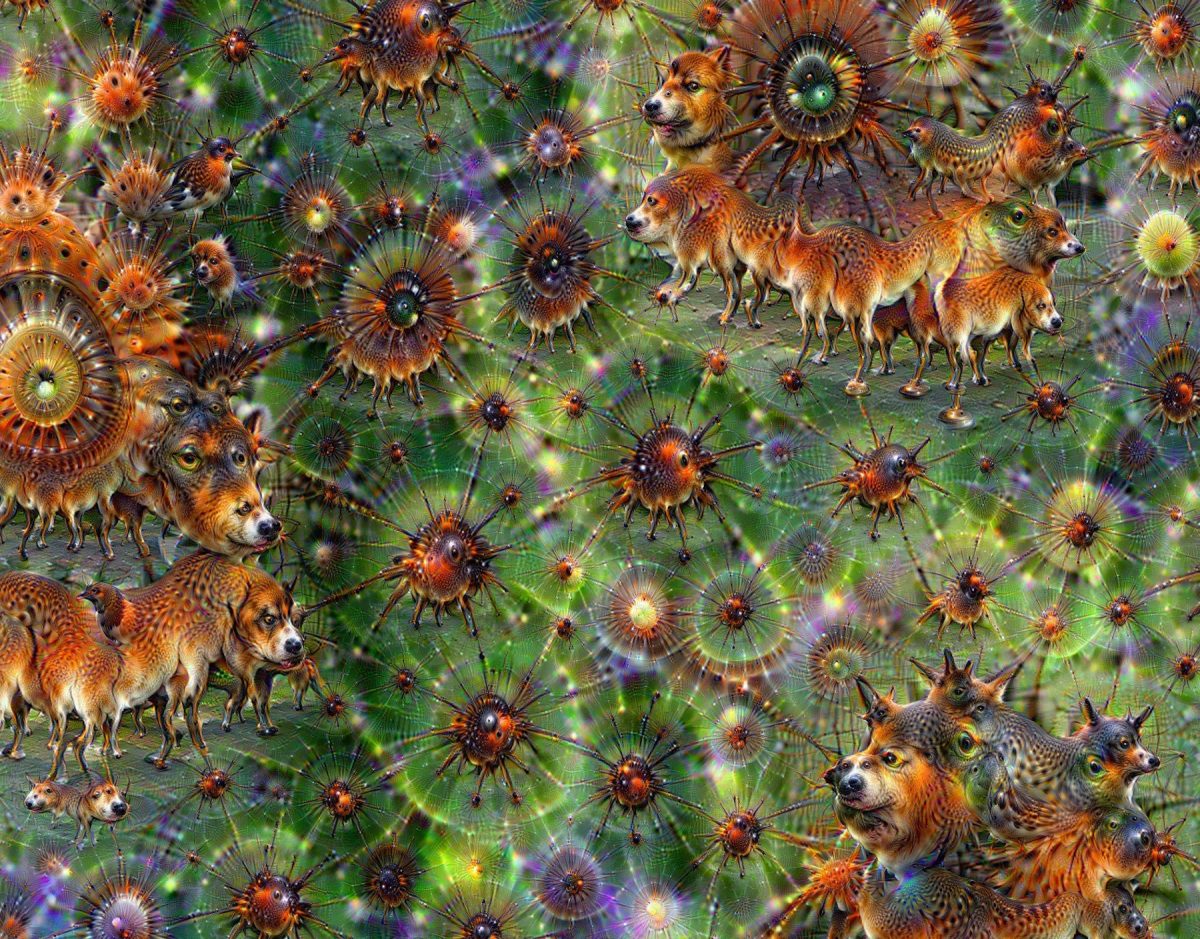

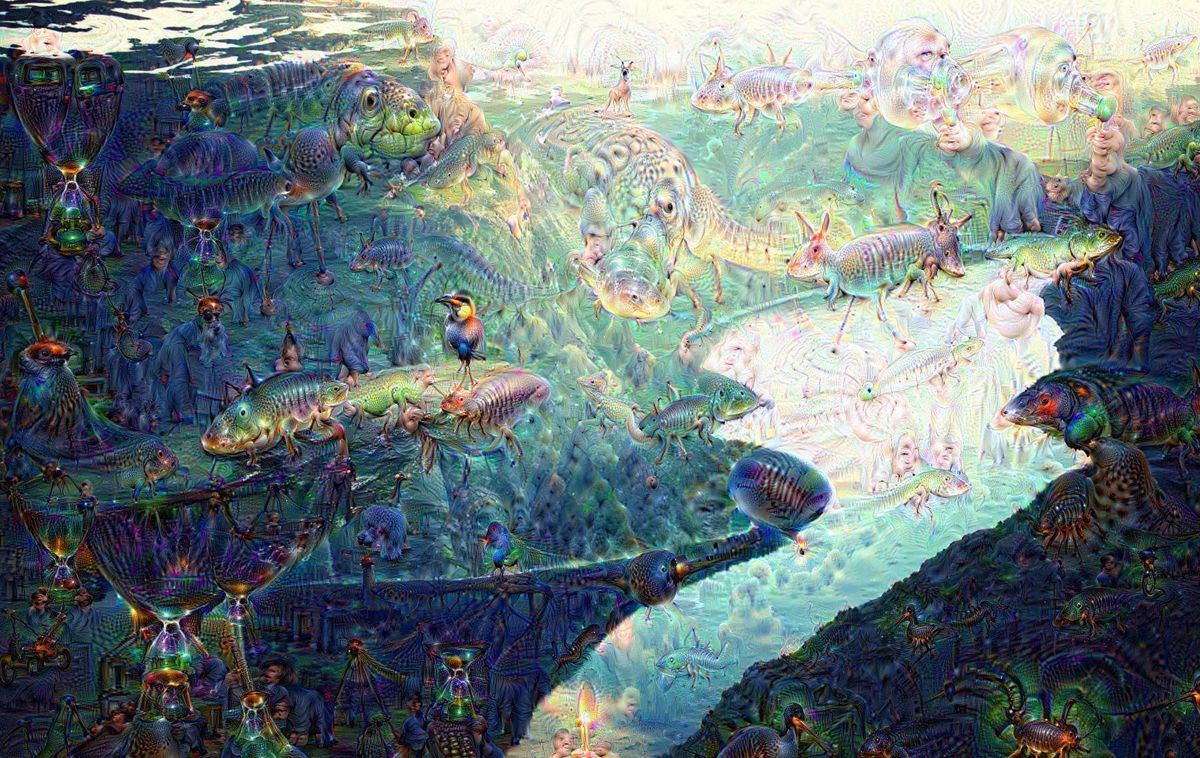

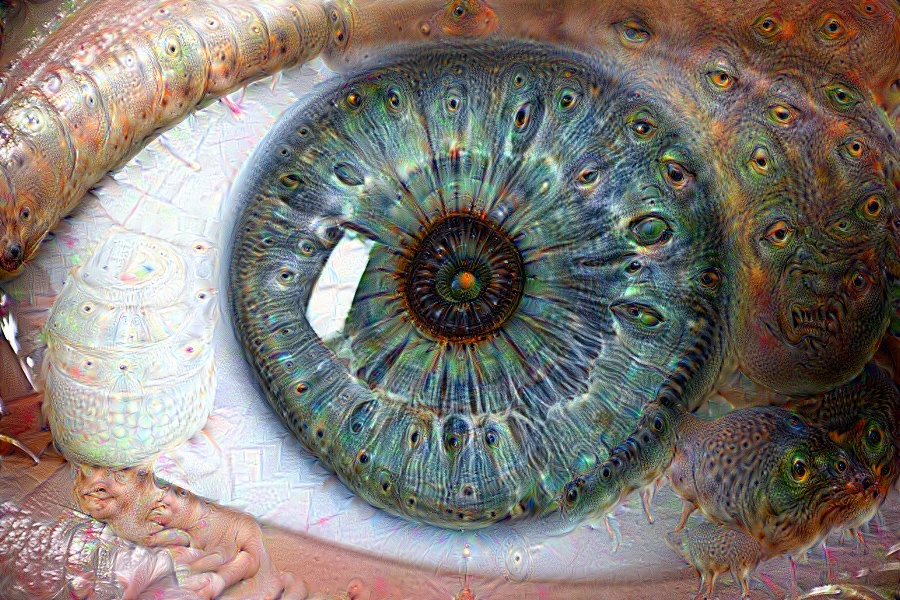

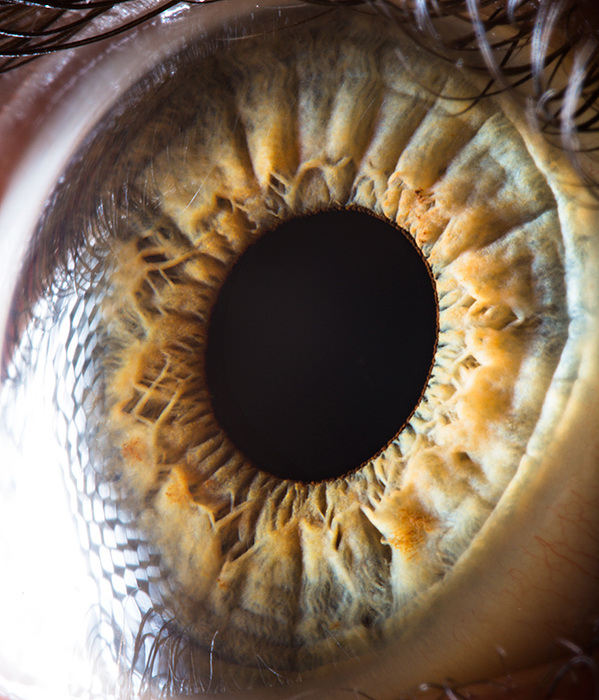

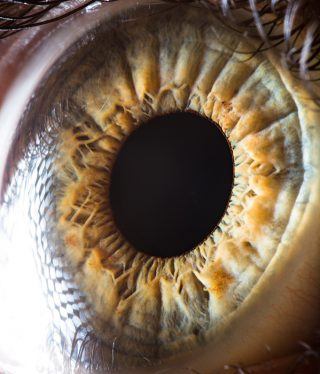

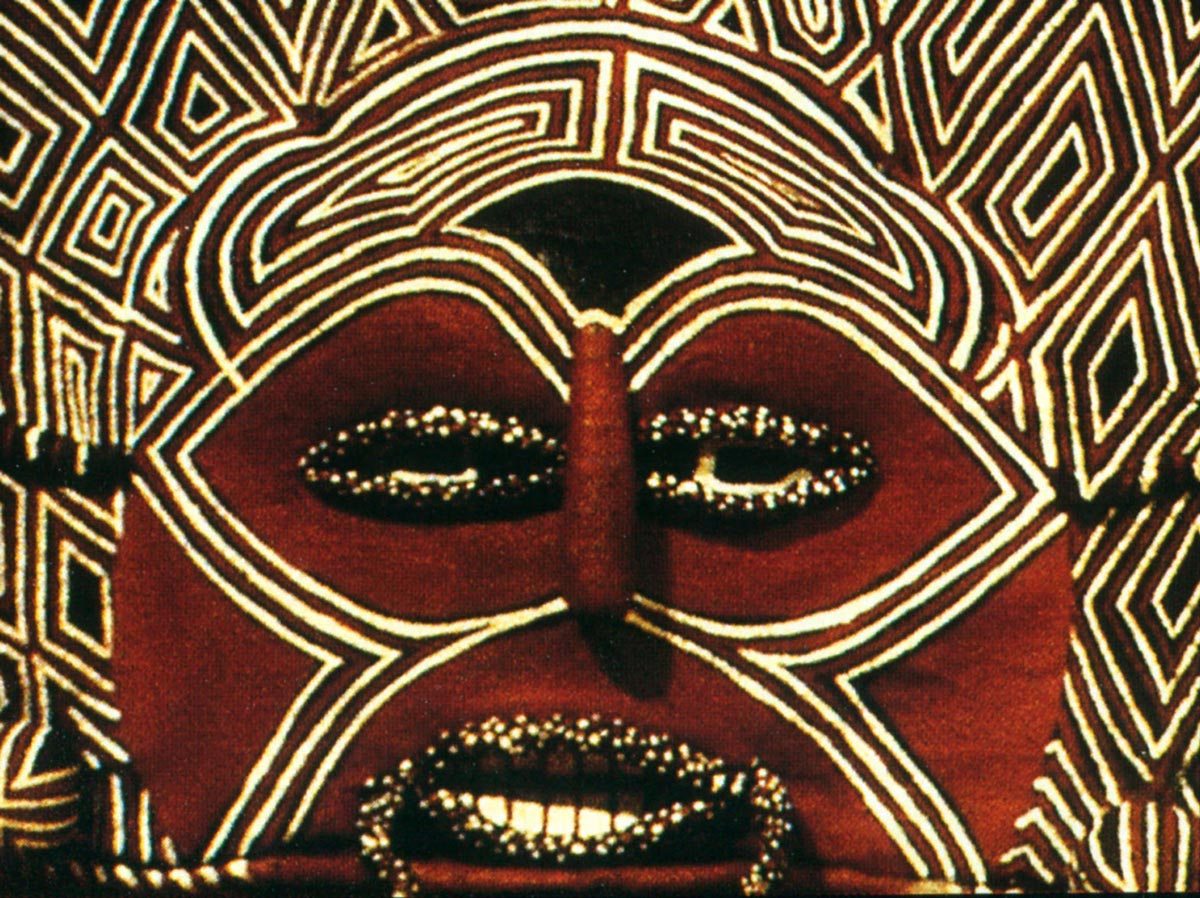

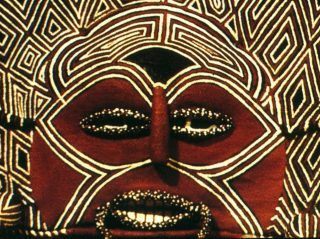

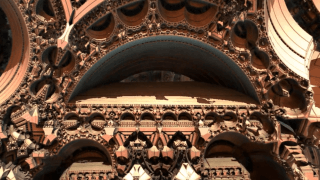

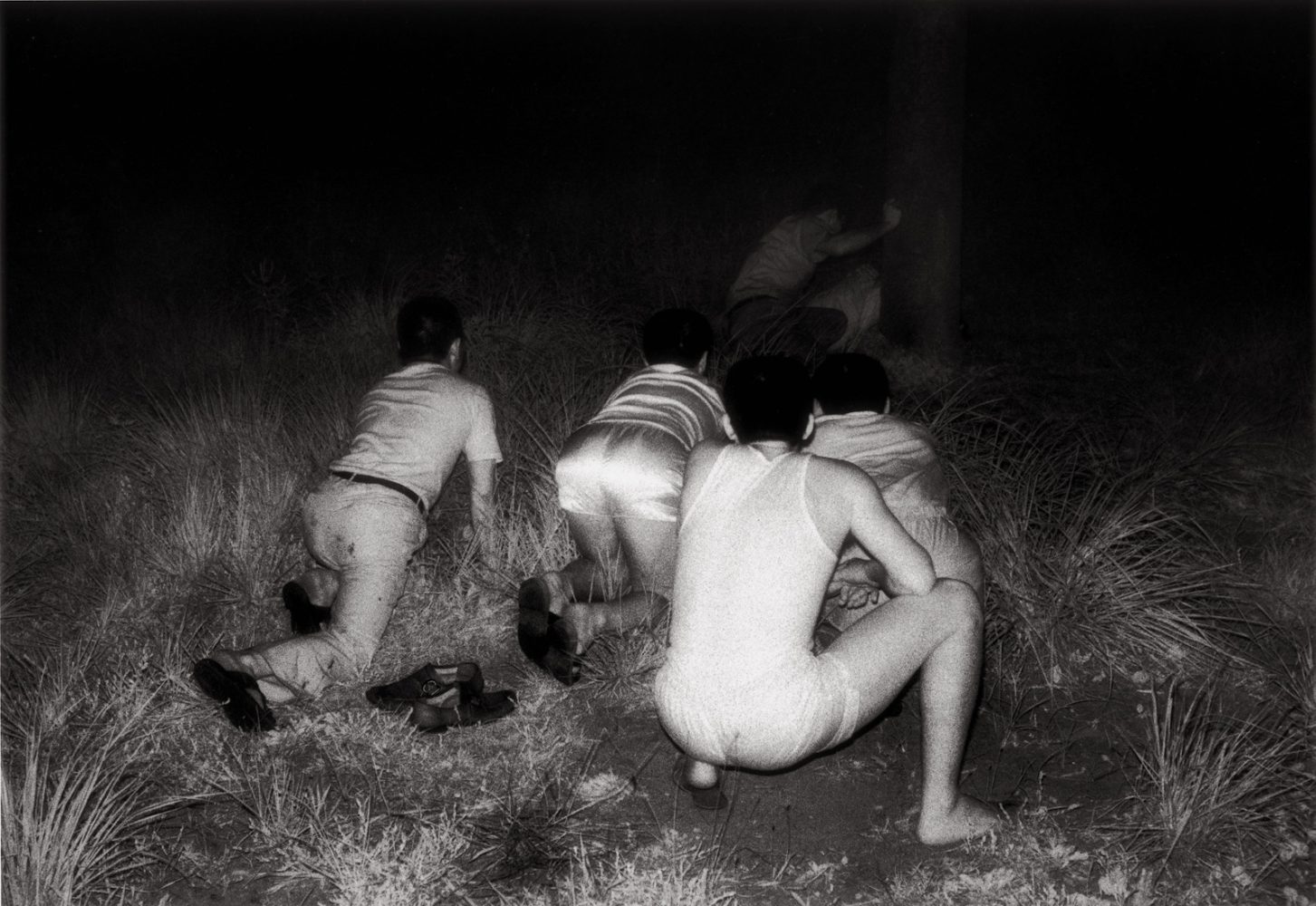

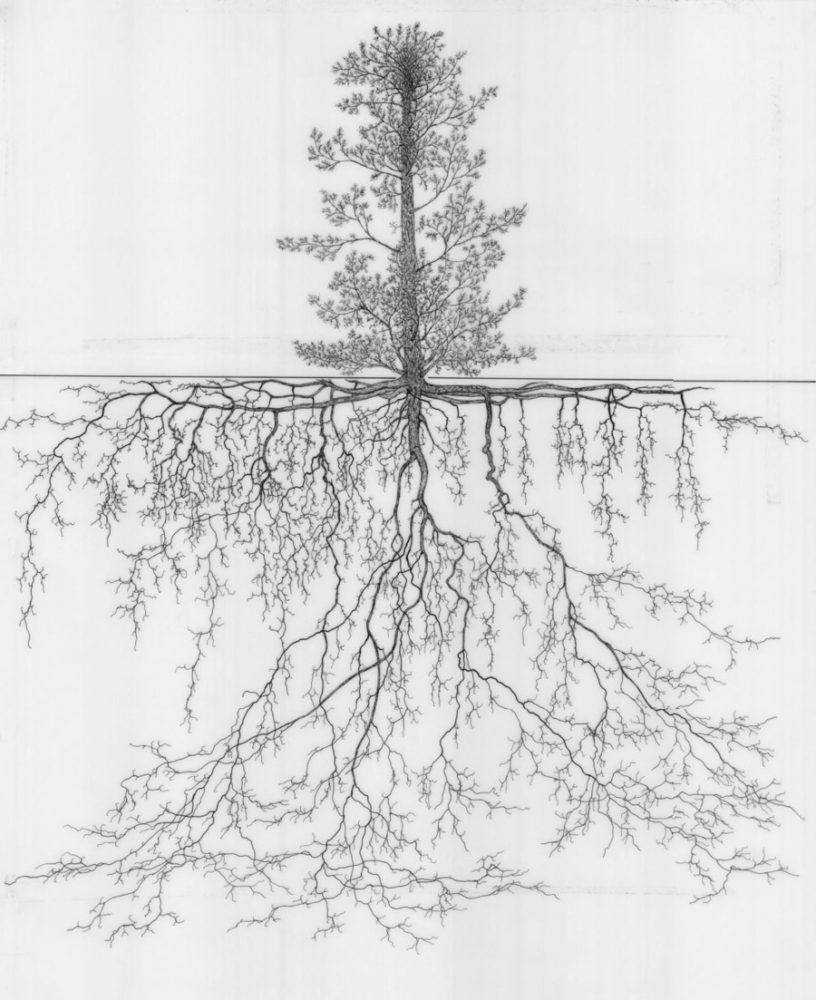

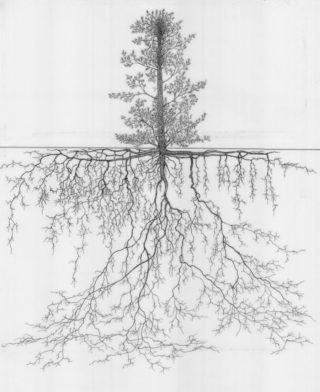

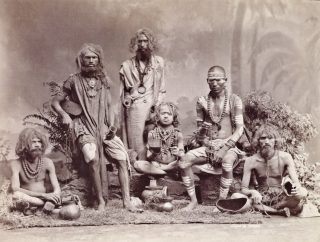

In June 2015, Google engineers released a set of images that caused a stir, even among people who grasp only the basics of what is going on here. The DeepDream algorithm shows us quite plainly how perception works: you can only see what you were trained to see. In the case of Google’s AI, that is obviously a lot of dogs.

The pictures are the result of an experiment with Google’s image recognition network, which has been “taught” to identify features such as buildings, animals and objects in photographs. The network is a product of deep learning, a relatively new field within machine learning. The researchers feed a picture into the artificial neural network, ask it to recognise a feature in the picture, and then modify the picture to emphasise the feature it recognised. That modified picture is fed back into the network, which is again tasked with recognising features and emphasising them, and so on. Eventually, the feedback loop modifies the picture beyond all recognition.
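To make the feedback loop a bit more concrete, here is a minimal toy sketch in plain Python. It is not Google’s code and it uses no neural network: the two hand-written `templates`, the 4x4 "image" and the `step` size are all assumptions made up for illustration. The real network's learned features are stood in for by fixed patterns, and "emphasising" is just nudging pixels towards the pattern that currently matches best.

```python
import random

def response(image, template):
    """How strongly the image matches one template (a plain dot product)."""
    return sum(p * t for p, t in zip(image, template))

def recognise(image, templates):
    """Return the name of the template the image currently matches best."""
    return max(templates, key=lambda name: response(image, templates[name]))

def emphasise(image, template, step=0.05):
    """Nudge every pixel towards the template, keeping values in [0, 1]."""
    return [min(1.0, max(0.0, p + step * t)) for p, t in zip(image, template)]

def dream(image, templates, iterations=30, step=0.05):
    """Feedback loop: recognise, emphasise, feed the result back in."""
    history = []
    for _ in range(iterations):
        name = recognise(image, templates)
        image = emphasise(image, templates[name], step)
        history.append(name)
    return image, history

# Two hypothetical 4x4 "features", flattened row by row (+1 bright, -1 dark).
# A trained network would have learned its features; these are hand-written.
templates = {
    "vertical_bar": [-1, 1, 1, -1] * 4,
    "horizontal_bar": [-1] * 4 + [1] * 8 + [-1] * 4,
}

random.seed(0)
noise = [random.uniform(0.4, 0.6) for _ in range(16)]  # near-featureless input
start = {name: response(noise, t) for name, t in templates.items()}

result, history = dream(noise, templates)
winner = history[-1]
print("feature the loop settled on:", winner)
print("match before:", start[winner], "after:", response(result, templates[winner]))
```

Even in this toy version the effect from the pictures shows up: whichever pattern happens to match the noise slightly better gets amplified on every pass, until the input is fully overwritten by what the "network" expected to see.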

Read the original article from Google with more details on the process:

Inceptionism: Going deeper into neural networks

And a couple of pictures in high-res for a better experience:

1 2 3 4 5

Deep Dream in motion: Grocery Trip